Deviant Standards

In Range

Column by: Terry Wieland | August, 18

Handloaders are all amateur ballisticians and, by default, amateur statisticians. Professionals of either ilk would laugh at the very idea that any of us is really a ballistician or statistician, and when you read some papers written by these people, you begin to see the difference.

From time to time, publishers of handloading manuals have retained professionals to write chapters on various topics such as velocity measurement, gauging accuracy or measuring load consistency. Almost without exception, those chapters are impenetrable except to other professionals.

From time to time, I get letters from readers asking why we include extreme spread (ES) measurements in our loading tables instead of standard deviation (SD), which is considered by professionals a much more accurate measurement of load consistency. This is a tough question to answer, since I could not explain the actual difference between the two except to mutter “It’s a statistical thing.”

That lack of knowledge bothered me, so I went looking for the answer. In the Speer Reloading Manual No. 11, published in

Ken Oehler was one of the men who made the portable chronograph affordable, and thereby changed the entire nature of handloading. By eliminating much of the guesswork involved in load development, he simplified it immensely by providing real numbers. At the same time, he complicated it by providing such arcane information as figures for ES and SD.

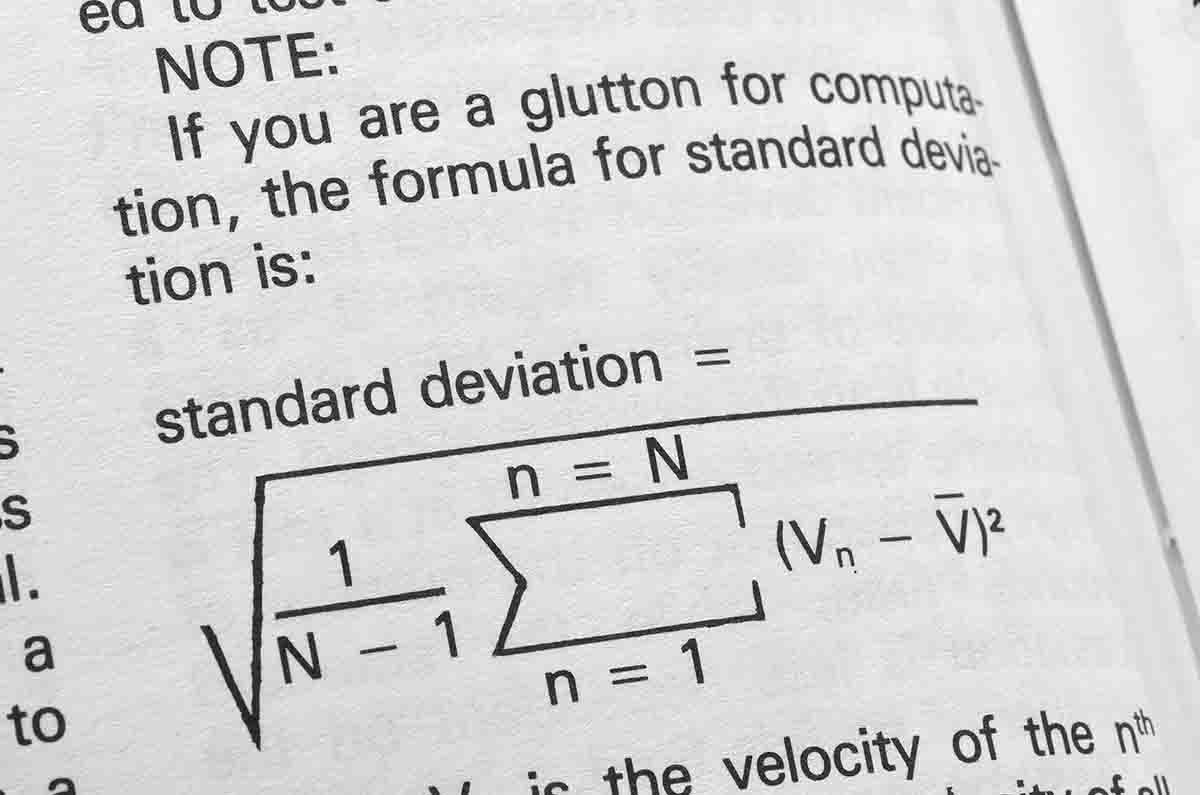

In Speer No. 11, Oehler provided a formula for arriving at standard deviation, and I could not tell you what most of the symbols involved even mean, much less how to use them to calculate anything. However, he was kind enough to add a one-paragraph explanation: “You first find the average velocity, then find the difference between each velocity and the average, square each difference, add all the squares together, divide the sum by one less than the number of rounds, and then take the square root.”

Compare that with the method of arriving at ES, in which you simply subtract the lowest velocity from the highest.
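Both calculations can be sketched in a few lines of Python. This is only an illustration: the function names are invented for the example, and the five velocities are made-up numbers, not readings from any load discussed in this column.

```python
import math

def extreme_spread(velocities):
    """Highest velocity minus lowest velocity."""
    return max(velocities) - min(velocities)

def standard_deviation(velocities):
    """Sample standard deviation, following the quoted step-by-step recipe."""
    n = len(velocities)
    if n < 2:
        raise ValueError("need at least two shots")
    average = sum(velocities) / n                        # find the average velocity
    squares = [(v - average) ** 2 for v in velocities]   # square each difference
    return math.sqrt(sum(squares) / (n - 1))             # divide by n - 1, take the root

# Hypothetical five-shot string, in fps (illustrative values only)
shots = [1410, 1422, 1405, 1418, 1415]
print("ES:", extreme_spread(shots))
print("SD:", round(standard_deviation(shots), 1))
```

For this made-up string, the extreme spread is 17 fps and the standard deviation rounds to 6.7 fps. Python's built-in `statistics.stdev` performs the same calculation.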

An immediate problem with calculating SD is having a valid average to start with. Oehler dismisses a three-shot average as almost useless in statistical terms. Five shots is better, and 10 is better still. I long ago settled on five shots as more than adequate for my purposes. Even at my grade-school level as a statistician, it quickly became obvious that measuring three shots, like firing a three-shot group for accuracy, leaves a handloader at the mercy of quirks, anomalies and pure chance, and can be very misleading.

Let’s assume, however, that you have a usable average, and you can then calculate SD. Is it worth it?

At the range the other day, I happened to chronograph four different loads for the .32-40 and fired five shots with each. The ES/SD relationships were as follows: 18-7, 49-19, 35-14, 37-14. In percentage terms, standard deviations were (in round numbers) 39, 39, 40, and 38 percent of extreme velocity spread. Statistically, I did not have anything like a large enough sample to draw any conclusions, but for my purposes, it’s enough. I’d be willing to bet that most of the time SD works out to 40 percent of ES, or a little less.
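The percentages are simple to check. The short sketch below uses only the four ES/SD pairs quoted above; the function name is invented for the example.

```python
# (ES, SD) pairs in fps, as quoted for the four .32-40 loads
pairs = [(18, 7), (49, 19), (35, 14), (37, 14)]

def sd_as_percent_of_es(es, sd):
    """Standard deviation expressed as a percentage of extreme spread."""
    return 100 * sd / es

percents = [round(sd_as_percent_of_es(es, sd)) for es, sd in pairs]
print(percents)  # [39, 39, 40, 38]
```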

In my opinion, standard deviation is a better measure of uniformity than extreme spread because it is less dependent on the number of rounds fired to be something approaching accurate. In other words, the fewer rounds you include in your test, the better it is to use SD than ES. Even allowing for that, Oehler said three-round tests of uniformity are useless, and five rounds are marginal.
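A quick simulation is one way to see how each figure depends on the number of rounds. The sketch below rests on assumptions that are not from the range: it supposes velocities follow a normal (bell-curve) distribution, with an arbitrary average of 1,400 fps and an arbitrary true SD of 15 fps. It generates thousands of imaginary strings of 3, 5 and 10 shots and averages the ES and SD for each string length.

```python
import random
import statistics

def average_es_and_sd(shots_per_string, strings=5000,
                      mean=1400.0, true_sd=15.0, seed=1):
    """Average ES and SD over many simulated strings (assumed normal velocities)."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    es_values, sd_values = [], []
    for _ in range(strings):
        string = [rng.gauss(mean, true_sd) for _ in range(shots_per_string)]
        es_values.append(max(string) - min(string))
        sd_values.append(statistics.stdev(string))
    return statistics.mean(es_values), statistics.mean(sd_values)

results = {n: average_es_and_sd(n) for n in (3, 5, 10)}
for n, (es, sd) in results.items():
    print(f"{n:2d} shots: average ES {es:5.1f} fps, average SD {sd:5.1f} fps")
```

Under these assumptions, the average ES keeps climbing as shots are added to the string, while the average SD stays close to the assumed 15 fps. For five-shot strings, the average SD also works out to roughly 40 percent of the average ES.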

The question then becomes, how useful is it to know this anyway? In theory, the more uniform a load’s performance, the more accuracy you can expect. That’s the theory, and is probably true most of the time, but not always. I have had beautifully uniform loads that, on the target, behaved like a handful of rocks, and loads with wild variations that grouped very well. In the end, the only way to measure accuracy is with holes in paper.

The same is true of velocity. How many times have you encountered a guy claiming 3,200 fps for his favorite handload only to discover that, when it is fired over a chronograph, it is 200 or 300 fps slower?

In the early 1960s, when the team of Homer Powley, Bob Forker and Bob Hutton brought to life the Powley Computer for calculating loads, they included projected velocities with a given load. At best, those can only be an educated guess and, in actual tests, velocities were sometimes several hundred feet per second lower. The later Powley psi calculator proved to have similar quirks, and it predicted pressures that often proved lower than actual.

Today, anyone who can afford a loading press can afford a chronograph, but very few of us own pressure barrels with all the related paraphernalia – nor would most of us really want to.

Useful as they were at the time, the Powley slide-rule computers became obsolete with the arrival of the digital computer. Now, programs are readily available on the Internet that will do everything Powley aspired to, and more. One such website, kwk.us, allows you to input data and arrive at the information you need, but also provides notes explaining pitfalls to avoid, as well as correcting some of Powley’s original assumptions that proved less than gilt-edged.

Just for fun, I took some calculations I worked out with the Powley Computer four years ago in Handloader No. 290 (June/July 2014) and tried them again on the Internet computer program. My intention was to report any disagreements between the two. In fact, the disagreements and complications were such that it proved impossible to do in the space available.

Speaking very generally, I found that the original Powley Computer, the Internet program and my own chronograph all disagreed on the velocity from a given load – one load, two different computed velocities, with neither very close to the actual measured result. They disagreed on chamber pressures, too, which I do not have the ability to measure, but based on the above I would not put much faith in either.

What does all this have to do with standard deviation? Just this: With modern chronographs, we now have the ability to measure standard deviations in velocity with a given load, but like many readings of load data, SD is less a measure of something tangible than it is an indication of what might or might not happen.

To get back to the four .32-40 loads mentioned above, on the target board the load with the worst performance in terms of ES and SD delivered the best group, while the lowest extreme spread and standard deviation delivered only the second-best accuracy. That is pretty much in line with notes I have in my loading log regarding different loads, their performance and their extreme spreads.

If I had to make a blanket judgement, I would say that extreme spread and/or standard deviation do not matter one whit unless your goal in life is to record the technically most-uniform load in reloading history. In terms of practical accuracy and game-getting ability, they amount to little more than a distraction.